The Missing Link Between Research Partnerships and Real-World Use

Pallavi and I have been sitting with a moment from a workshop we facilitated last October.

It was one of those small exchanges that should have been forgettable, but instead it kept tugging at us—because it captured something we see all the time when people talk about “research translation.”

This piece is for faculty, research leaders, and funders who care about impact—but feel stuck in a familiar pattern: partnerships feel productive, yet research outputs don’t consistently travel into practice, policy, or products.

Research translation is breaking down upstream, not because of a lack of motivation, but because partnering and real-world use aren’t being designed together.

The workshop moment that revealed a translation blind spot

Many faculty sincerely believe they “do translation,” but their definition often stops at dissemination—and misses the partner/user ecosystem required for use.

In the workshop, we asked a question that sounds simple (and maybe a little confronting):

“If your research is meant to matter in the world, do you know who is benefiting from it, how they’re using it, and what would make it more usable?”

We’re asking this in a very specific context. Funding expectations are changing. NSF and many philanthropic funders are signaling—sometimes explicitly—that it’s no longer enough to say “we’ll disseminate findings.” They’re looking for credible pathways from research to use.

During a break, we ended up talking with a couple of researchers. They told us (confidently and sincerely):

Faculty: "Research translation is part of our ethos. We're social scientists."

We were genuinely glad to hear that. So we asked the obvious next question:

We: "What kind of research are you doing?"

Faculty: "We study the impact of social media on kids."

We: "That's important work." And then we asked something we've learned to ask when someone says their work is "translational": "Are you partnering with computer science faculty to understand the role of algorithms in shaping what kids actually see?"

Faculty: "Ah—we should… but we haven't."

We: "Have you partnered with families and community organizations to understand lived experience and inform what you study? What about schools, youth organizations, or policymakers who might actually apply the findings? Any interaction with the platforms themselves?"

They said no. They also shared that partnering with tech companies would feel out of alignment with their values, and that most of their research relied on observing what's out there or using secondary data.

This is anecdotal. We’re not sharing it to judge. The researchers were thoughtful and principled. But the exchange surfaced something we think we need to name — a distinction that matters:

Doing impactful research is not the same as creating impact with research.

The research question mattered. The values were thoughtful. But without engaging the broader ecosystem of stakeholders around that research (from families and schools to policymakers and, sometimes, even industry actors), it’s easy to miss the upstream design choices that make real-world change possible — in practice, policy, or technology.

And it also surfaced something else: Many faculty believe they “do research translation,” but their definition of translation is narrower than they realize.

And in today’s environment, that gap matters. That moment isn’t a one-off; it reveals a deeper pattern in how translation is understood.

Related reading: Partnering for Impact: How research centers can build ecosystems that last (a practical four-level approach: individual → project → institutional → societal).

The core problem: translation treated as downstream comms, not upstream design

Here’s the pattern we see: translation is often treated as an identity—“we care about impact”—instead of as a set of design choices that make impact more likely.

Which leads to the core idea that has become a kind of north star for our work:

Research translation isn’t a communications step after the research—it’s an upstream design discipline. Most failures are design failures, not dissemination failures.

It’s the choices you make early—about partners, roles, outputs, timing, incentives, and governance—that shape whether your work becomes usable in the world. And the stakes are rising, because external expectations are shifting fast.

Why this matters now: expectations have shifted, but systems haven’t

The definition of research excellence is expanding. Across public agencies, philanthropic funders, and industry partners, expectations increasingly extend beyond publication toward credible pathways from research to real-world use. Yet institutional systems and norms still push translation into a post-hoc activity.

For many faculty, this creates both opportunity and strain. Impact is valued, but the systems that support impact (shared frameworks, metrics, roles, time, and institutional support) remain uneven. So translation often happens post hoc, once findings exist, rather than as an upstream set of choices that shapes what research can realistically achieve. That is the core gap: expectations have moved beyond dissemination, but many practices still treat translation as a downstream activity. These expectations show up differently depending on who you’re accountable to (funders, communities, or industry partners), but the direction of travel is consistent, as the signals from different funders and partners show.

The shared signal from funders and partners: design for use

While terminology varies across public, philanthropic, and industry contexts, a consistent shift is underway: research translation is no longer defined by dissemination alone, but by intentional pathways from research to real-world use.

Public funders: translation as institutional capability. Across NSF programs—especially within the Technology, Innovation, and Partnerships (TIP) Directorate—translation is framed as use-inspired research supported by cross-sector partnerships and institutional infrastructure. Translation is not a single activity; it is a capability an institution builds (see NSF’s Accelerating Research Translation (ART) solicitation).

Philanthropic funders: translation as evidence use. Many foundations emphasize not just sharing research, but improving how evidence is used in decisions. Translation is embedded in co-production, mutual learning, and capacity building for evidence use (see the W.T. Grant Foundation’s research grants on improving evidence use and Spencer’s Research–Practice Partnerships).

Industry partners: translation as innovation pathways. In industry-engaged research contexts, translation is often defined in terms of innovation pathways and joint value creation. Effective translation relies on early alignment on goals, shared governance, mutually beneficial outcomes, and mechanisms for integration, not a downstream step after the research is “done” (see the National Academies’ University–Industry Demonstration Partnership (UIDP) and UIDP’s work to strengthen and modernize university–industry partnerships).

If you zoom out, the shared signal is pretty clear:

Design for use, not post-hoc packaging

Engage users meaningfully, not as a late-stage checkbox

Make the pathway to adoption/application explicit

Look for evidence of use, learning, or uptake—not only academic outputs

And this shift matters even in the domain many people assume is “already translation”: commercialization.

In some fields, the “real-world use” pathway is commercialization: patents, licensing, startups, product development. But even here, translation is not automatic. One large-scale survey of inventors found that a substantial share of patents, roughly 40%, remained unused.

An invention doesn’t become an innovation just because it’s patented. Adoption still requires upstream work: partnering early with the people who shape feasibility, fit, incentives, regulation, workflows, and demand.

So what does “translation” actually mean in practice—and how do we define it at Saath?

How Saath defines translation: a continuum (and a power lens)

Our approach to research translation draws on our experience facilitating more than 50 research-for-impact projects across 45 universities through the USAID-funded LASER PULSE program, as well as delivering applied research training workshops at institutions.

In our work, in our research, and in the broader literature, research translation is best understood as an umbrella term for a range of approaches that vary in:

how intentionally research is designed for use, and

how researchers engage intended users throughout the research lifecycle.

Research Translation Continuum

Translation spans a continuum of approaches defined by (1) how intentionally evidence is designed for use and (2) how users/partners are engaged—while power shapes outcomes.

Related reading: From Evidence to Impact: What the Research Translation Continuum Reveals About Collaboration (a walkthrough of the continuum and what it looks like in practice).

Drawing on the Research Translation Continuum, translation approaches range from:

Proactive research translation, where planning for evidence use begins before research starts and collaboration with users is sustained through adoption; to

Post-hoc research translation, where research is translated after publication, often with limited partner engagement.

A quick clarity note (because this matters): this isn’t an argument that every project must be co-produced, industry-engaged, or commercialized. It’s an argument that translation choices should be intentional and transparent—so the approach matches the kind of use you want to enable.

Our research and experience also lead us to keep another reality at the center: translation is shaped by power.

Who sets the agenda?

Whose knowledge is legitimized?

Who gets to define “impact”?

How are decisions made?

Those choices shape outcomes, whether we name them or not.

We’ve been testing this framework in real contexts, and the patterns are striking.

The Partnering–Utilization Gap: Perceived strong partnering, yet weaker research utilization

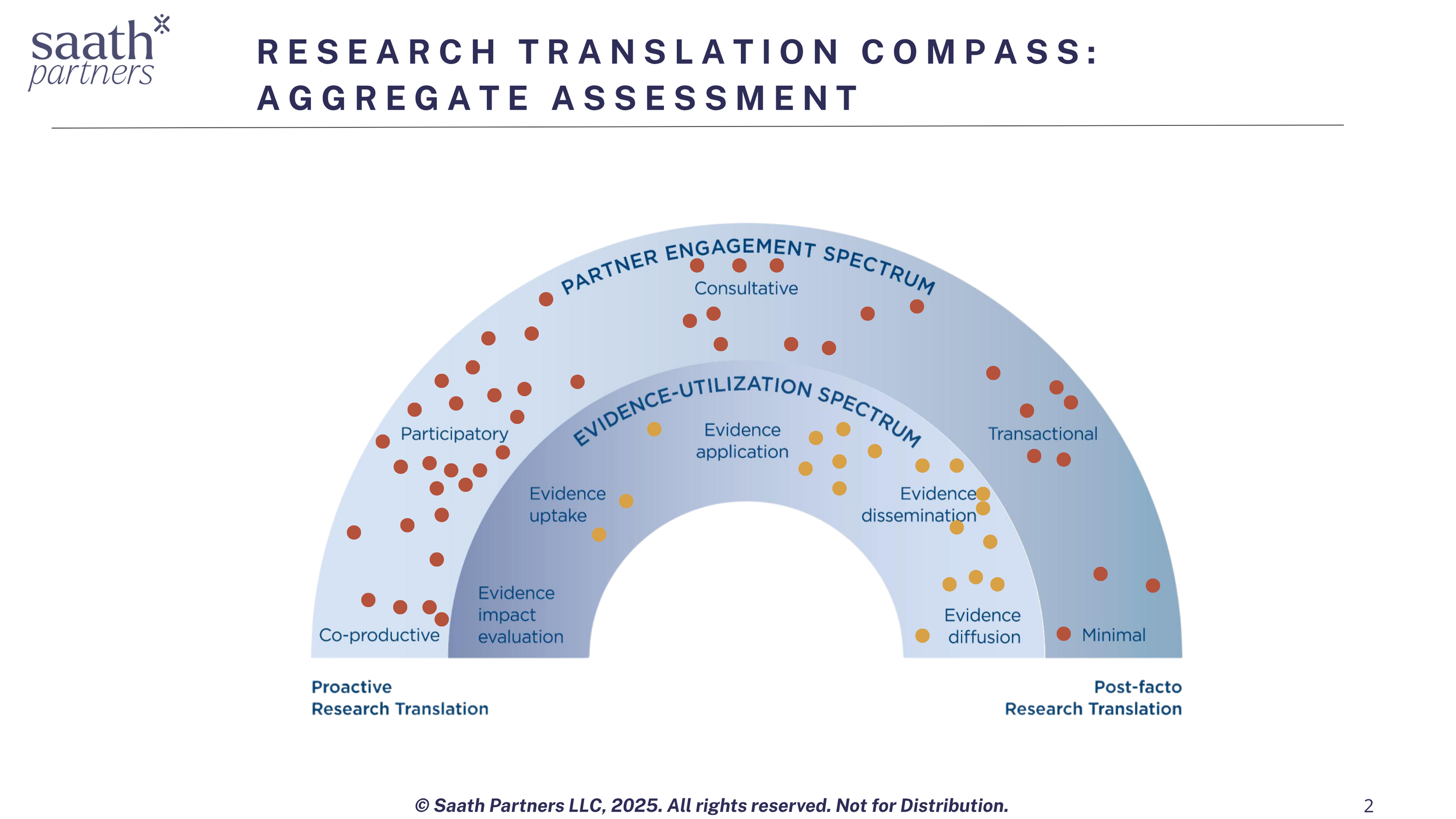

In our recent workshops, we deployed the Research Translation Compass, a Saath Partners self-assessment tool developed from our published Research Translation Continuum framework, in which faculty reflect on their research-to-impact practices.

What we learned:

Researchers self-reported strong consultative, collaborative, and co-productive partnering practices.

But on the utilization spectrum, practices clustered closer to dissemination than application, adoption, or sustained use.

This surfaced a revealing dichotomy.

Partnering–Utilization Gap

Researchers may invest heavily in collaboration (and feel like they are “partnering well”), yet the work still lands closer to dissemination than to adoption and sustained use.

If partnerships are truly built on shared value, then outputs should be designed with the partner/user in mind—so they can actually be applied, adopted, scaled, or integrated into real decisions.

When that doesn’t happen, we have to be honest about what it creates: partner fatigue (“we met a lot, but nothing changed”), missed timing windows, wasted effort, and a slow erosion of trust that makes future collaborations harder.

Here’s what “designed for use” can look like in practice:

a decision-ready brief aligned to an agency’s planning cycle

a pilot protocol a community organization can run next month

an implementation guide that fits a school district workflow

a prototype with a clear adoption owner

a dashboard that shows up inside an actual decision meeting

Not just a publication or report someone might find later.

Another research finding: practitioners don’t always find outputs relevant

This pattern also showed up in our research. In a survey of researchers and practitioners with experience in cross-sector collaboration, a large percentage of practitioners reported that the outputs from collaborations did not feel relevant to practice—and many perceived themselves as less likely to collaborate with researchers in the future.

That’s a signal—not about researcher intent, but about translation design.

To close the Gap, we have to name where translation reliably breaks down.

Why translation fails: the structural bottlenecks, not the motivation

The Partnering–Utilization Gap isn’t mysterious. It persists because of a few predictable breakdown points: misalignment, timing mismatches, under-resourced translation labor, weak clarity on use, and dissemination overweight.

Across our research, participant surveys, assessments, trainings, and advisory work with academics and research users—including open-text survey data from the USAID-funded LASER PULSE consortium (800+ responses on research translation and partnership challenges)—we see a consistent set of challenges. These are widespread, often unintentional, and cut across disciplines and funding contexts:

Misaligned expectations (you find out too late you’re not building the same thing). Teams start with shared enthusiasm, but not shared specificity. People use the same words—“impact,” “partner,” “deliverable,” “success”—while meaning different things. That’s why misalignment often only surfaces at the moment of friction: when a partner asks for something the research team didn’t plan to produce, or when the team realizes the partner can’t actually use what’s being generated. Structurally, this happens because projects are rewarded for getting funded and started, not for investing time in early co-definition (roles, decision rights, scope boundaries, and what “done” means).

What we often hear: “I thought you were going to deliver X,” “We assumed you’d handle adoption,” “We didn’t realize we needed approval from XYZ.”

Time & institutional rhythm mismatches (the calendars don’t line up). Academic timelines (semester cycles, IRB, grant milestones, graduate student turnover, publication cadence) rarely align with practitioner and industry timelines (budget cycles, procurement, policy windows, product roadmaps, community trust-building, urgent operational needs). The result is predictable: a project can be “on track” academically while missing the moment when a decision had to be made. Structurally, this happens because universities are optimized for rigor and continuity of inquiry—while partner systems are optimized for service delivery, risk management, and speed.

What we often hear: “By the time the findings were ready, we’d already made the decision,” “We can’t wait 9 months for that,” “We can’t commit staff time during peak season.”

Under-recognized translation labor (the work between the work). Translation isn’t just making a brief or holding a meeting; it’s the ongoing project infrastructure that makes use possible: convening, facilitation, governance, decision-making, documentation, sensemaking across disciplines, handoffs, follow-through, and relationship management when priorities shift. In most collaborations, this work is treated as “extra” rather than as core delivery. Structurally, it falls through the cracks because it doesn’t map cleanly to academic credit and incentives (papers, grants, tenure signals) and it’s rarely budgeted as a real line item with accountable ownership.

What we often hear: “We keep meeting but nothing moves,” “No one owns the follow-through,” “We didn’t budget for someone to coordinate this.”

Fragile clarity on use (no shared picture of the adoption pathway). Even when teams agree on outputs, they often haven’t answered the practical use questions: Who will use this? Where will it live? When will it be used (which decision moment)? What has to change for it to be adopted (workflow, incentives, capacity, approvals)? Without this shared “use logic,” outputs get produced but don’t land inside a real decision or operating system. Structurally, this is common because many projects are framed around knowledge production rather than adoption design—and because “use” sits outside most academic training and institutional processes.

What we often hear: “We assumed they’d take it from here,” “We don’t know who the owner is on their side,” “It’s helpful, but we can’t operationalize it.”

Dissemination overweight (publishing becomes the finish line). A project can be excellent at producing outputs—papers, webinars, toolkits, dashboards—and still be weak at uptake, iteration, and sustained use. Dissemination is visible and measurable; adoption is slower, contextual, and requires ongoing partner-side change. Structurally, institutions fund and reward deliverables, not integration: there’s often no time, mandate, or resourcing for pilots, implementation support, product management, training, or learning loops after “publication.”

What we often hear: “We shared it widely, but it didn’t change anything,” “The report was great, but it’s sitting on a shelf,” “We needed implementation support, not just a deliverable.”

Together, these challenges point to a core insight: research translation failures are rarely about faculty motivation or research quality; they stem from systems that were not designed for use-inspired research.

Related reading: Why Collaboration Between Academics and Practitioners Matters Now? (why collaboration matters, what’s in the way, and why it’s urgent).

The takeaway: build translation into the design (at individual and institutional levels)

If the breakdowns above feel familiar, the “fix” isn’t a better infographic at the end. The fix is earlier decisions: clarity on who the work is for, what it has to land in, and who has to do which parts of the adoption work.

Believing in translation isn’t enough—translation capability is built by making upstream design choices and supporting structures that make use possible.

In other words, closing the Partnering–Utilization Gap is less about motivation and more about building translation capability—at the level of individuals, teams, and institutions.

Stepping back, this is why that workshop moment still matters: it surfaced that translation is not an ethos—it’s a design discipline. And design choices are something we can change.

What to do next?

A simple place to start is to ask one upstream question that makes the pathway to use explicit.

For individual faculty and research teams:

“Where is our work currently sitting on the Research Translation Continuum—and are we seeing a Partnering–Utilization Gap (strong collaboration, weaker uptake/use)? What upstream design choice would most reduce that gap in our next project?”

If you want a structured way to answer this, reach out to Saath Partners for the Research Translation Compass assessment (link coming soon).

For research leaders (center directors, department chairs, associate deans):

“What is the most common breakdown point in our portfolio (misalignment, timing, translation labor, clarity on use, or dissemination overweight)—and what would we change institutionally to prevent it from recurring?”

For funders and institutional sponsors:

“Are we funding (and evaluating) a credible pathway to use—or mostly funding outputs that can be disseminated, with adoption left implicit?”

If you’re grappling with any of these questions, Saath Partners supports faculty, research leaders, and funders in making translation designable: clarifying intended users and use contexts, strengthening partnering strategy and governance, and building institutional translation capability through tools, assessments, and facilitated working sessions.